1. Introduction

The process to read JSON files from AWS S3 folders using Informatica cloud (IICS) is different from reading JSON files from Secure agent machine. We have discussed in detail the process to read JSON files from secure agent machine in our previous article. In this article let us discuss the step-by-step process to read a JSON file from AWS S3 bucket and also discuss the process to read multiple JSON files with same structure using Indirect loading method.

2. Reading a JSON file as input from AWS S3 bucket

For the purpose of demonstration Consider the following as the source JSON file data which we want to read and parse through Informatica Cloud.

Contents of author.json file.

[

{

"AUTHOR_UID": 1,

"FIRST_NAME": "Fiona",

"MIDDLE_NAME": null,

"LAST_NAME": "Macdonald"

},

{

"AUTHOR_UID": 2,

"FIRST_NAME": "Gian",

"MIDDLE_NAME": "Paulo",

"LAST_NAME": "Faleschini"

},

{

"AUTHOR_UID": 3,

"FIRST_NAME": "Laura",

"MIDDLE_NAME": "K",

"LAST_NAME": "Egendorf"

}

]METHOD-1: Using Native S3 Connection properties

In the Source transformation, select the Amazon S3 v2 Connection and JSON file you wanted to parse as the source object with input file format as Json.

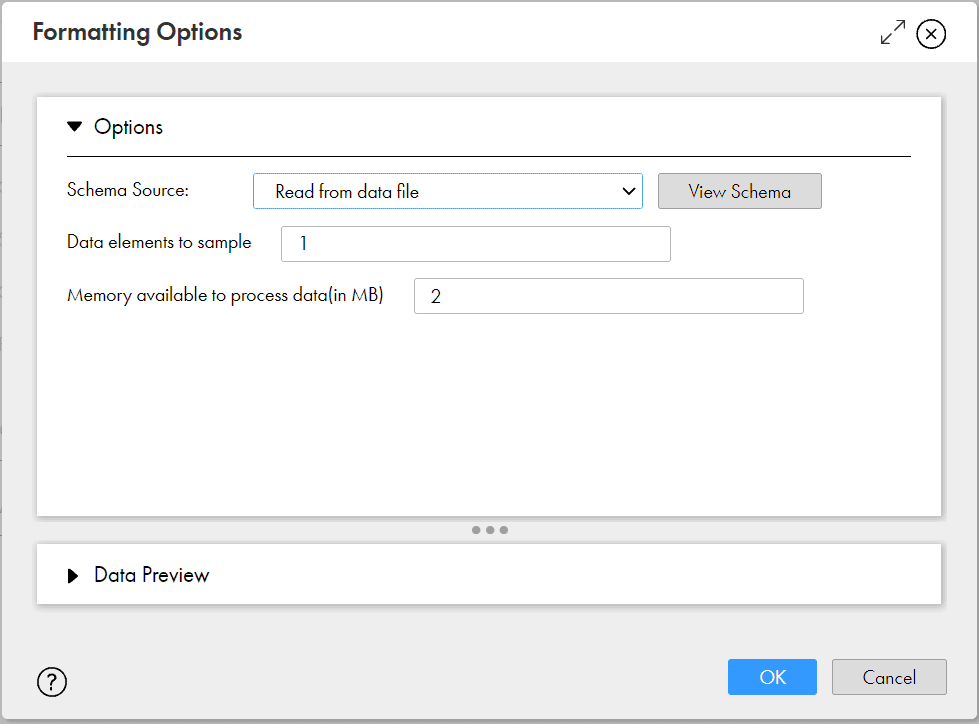

Under Formatting Options, select the Schema Source as Read from data file and other options with default values. This way we are asking the Informatica to understand the hierarchy from source file without passing any schema file for reference.

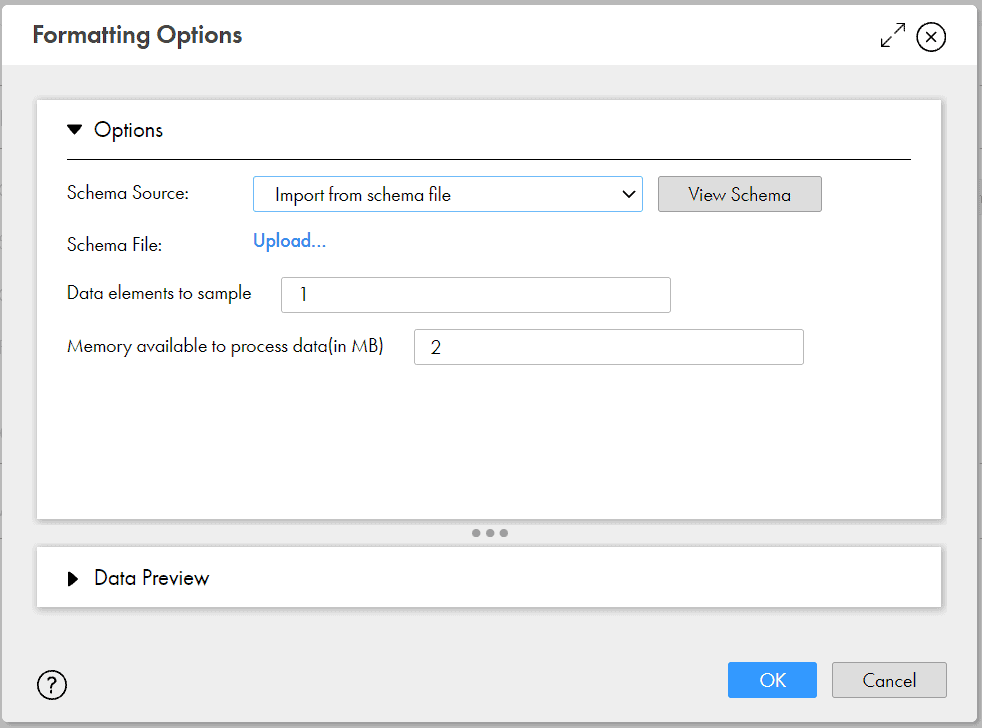

Alternatively, we can upload a schema file for Informatica to compare and parse the source file by selecting the Schema Source as Import from Schema file.

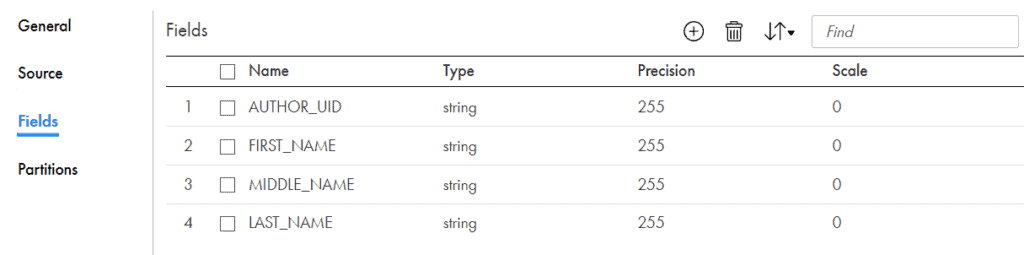

Under Fields tab of source transformation, we can see the relational fields created by Informatica data Integration by parsing JSON file.

Pass the relational source fields to downstream transformations to further transform as per requirement and load into target.

METHOD-2: Using Java and Hierarchy Parser Transformation

Step1: Create a template file for Hierarchical Schema

Based on the structure of your JSON file, prepare a sample file which defines the schema definition of your JSON file.

From our source data, we can see that it contains array of author details. Each author element in the array consists of 4 attributes – AUTHOR_UID, FIRST_NAME, MIDDLE_NAME, LAST_NAME and their corresponding values.

Below template defines the structure of our JSON file.

[

{

"AUTHOR_UID": 999,

"FIRST_NAME": "f_name",

"MIDDLE_NAME": "m_name",

"LAST_NAME": "l_name"

}

]Step2: Create a Hierarchical Schema

Create a Hierarchical Schema using the template file created in the earlier step.

To create a Hierarchical Schema, login to Informatica Cloud Data Integration > Click on Components > select Hierarchical Schema > click Create.

Enter the name and description of Hierarchical Schema. Select the JSON sample file created in earlier step which contains the hierarchical structure of source data. Validate and Save the Hierarchical Schema.

Step3: Create a Mapping to read JSON file

I. Configuring Source transformation

In the Source Transformation, select the Amazon S3 v2 Connection and JSON file which you wanted to parse as the source object with input file format as None.

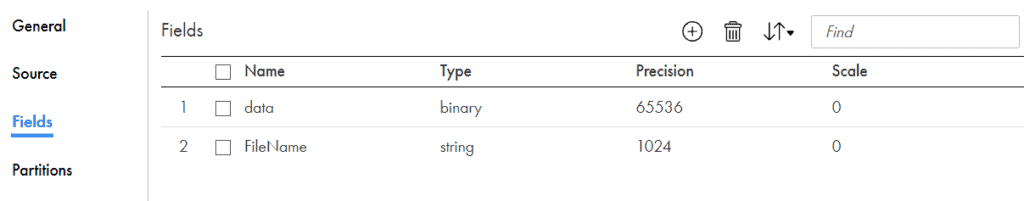

Under Fields tab of Source transformation, you can see two fields. The entire JSON file contents are stored into a single field named data of type binary. The JSON file name information is stored into field named FileName of type String.

In order to convert the binary data to string, Java transformation is used.

II. Configuring JAVA transformation

Pass the data from source transformation to Java transformation.

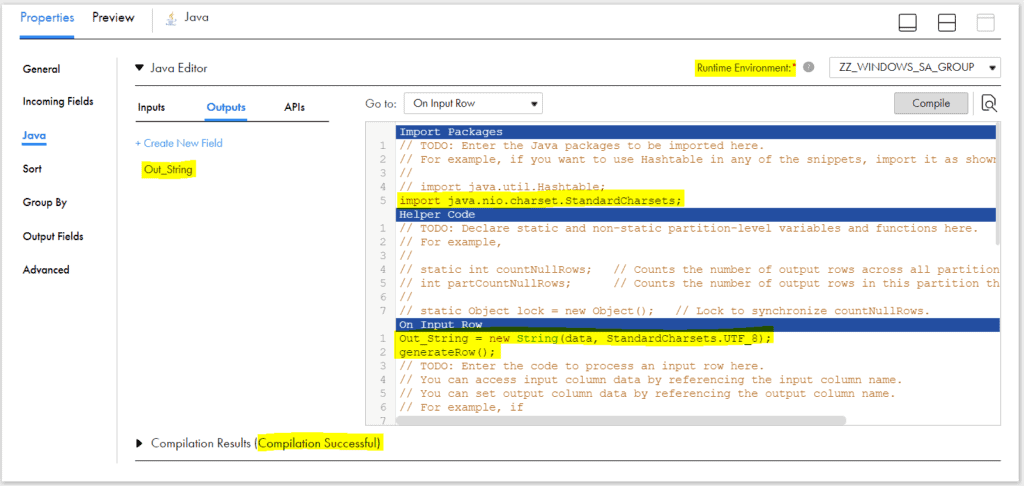

Under Java tab of the transformation, navigate to Import Packages under Go to section.

Under Import Packages of Java Editor enter below text to import java package which translates between bytes and Unicode characters.

import java.nio.charset.StandardCharsets;Select On Input Row under Go to section. Under Outputs tab, create a new field of type string to hold the data converted from binary. Ensure proper precision for the field based on your input data, else data truncation would occur.

Under Input Row section of java editor, provide the conversion code as below which converts the binary data to string and loads into field Out_String created in earlier step.

Out_String = new String(data, StandardCharsets.UTF_8);

generateRow();

Select the Runtime Environment and click on Compile.

Pass the data to Hierarchy Parser transformation once compilation is successful.

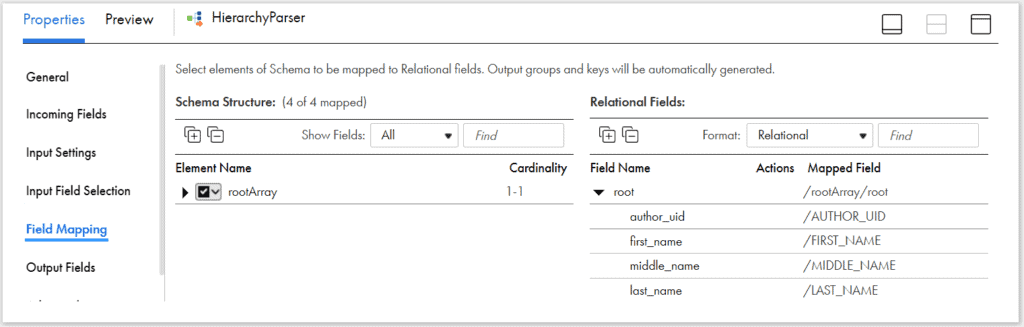

II. Configuring Hierarchy Parser transformation

Under Incoming Fields tab of Hierarchy Parser transformation, include only string output from Java transformation and exclude other fields.

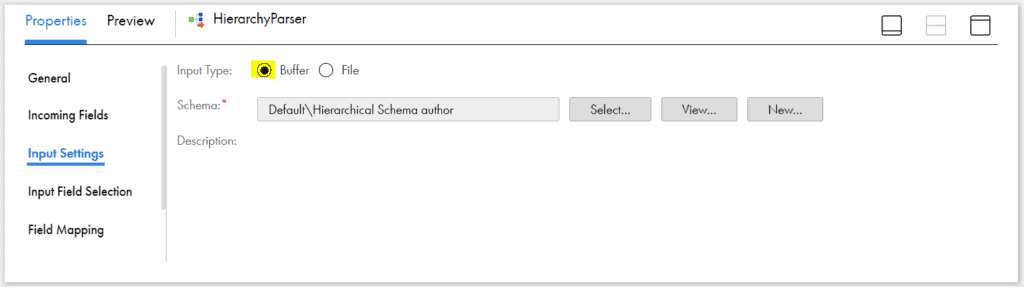

Under Input Settings tab, select the Hierarchical Schema created in earlier steps. Select Input Type as Buffer.

Buffer mode should be selected when the JSON/XML data is in the incoming source column. File mode should be selected when the JSON/XML data is directly read from a file.

Here we are reading JSON data in the form of a field and hence Buffer mode is selected.

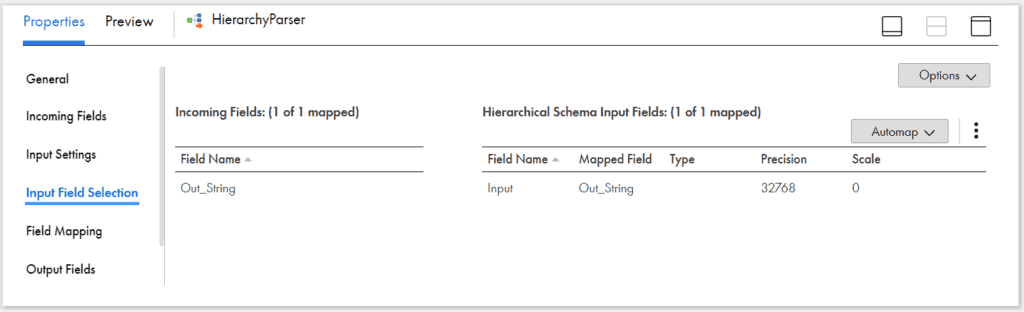

Next under Input Field Selection tab, map the incoming field from Java to the Hierarchical Schema Input Field.

Under Field Mapping tab, map the elements from rootArray to target as required.

The above shown field mapping implies that now each author element from JSON file will be converted into a single relational output row with column names as AUTHOR_UID, FIRST_NAME, MIDDLE_NAME and LAST_NAME

III. Configuring Target transformation

Pass the data from Hierarchy Parser to a target transformation.

If your data set contains multiple output groups, map them to appropriate downstream transformations and join them before passing to target transformation. In our example, we have only one output which will be mapped to target.

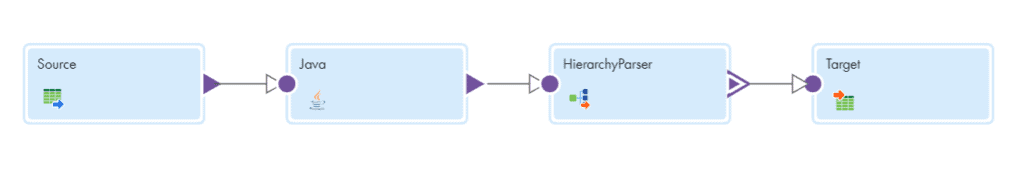

The final mapping will be as below.

Save and run the mapping. The final output we get will be as below.

"author_uid","first_name","middle_name","last_name"

1,"Fiona",,"Macdonald"

2,"Gian","Paulo","Faleschini"

3,"Laura","K","Egendorf"3. Reading multiple JSON files as input from AWS S3 bucket – Indirect Loading

In our previous article, we have discussed in detail how to perform Indirect loading of AWS S3 files using manifest files. Please refer the linked article for more information.

In order to read multiple JSON files as input in a mapping, create a manifest file and pass it as source.

Use the below format to prepare a manifest file.

{

"fileLocations": [{

"WildcardURIs": [

"directory_path/filename*.json"

]

}, {

"URIPrefixes": [

"AWS_S3_bucket_Name/"

]

}],

"settings": {

"stopOnFail": "true"

}

}Select the manifest file as the input in source transformation instead of JSON files with input file format as None.

The rest of the procedure is same as METHOD-2 explained in above sections of article. Pass the data to Java transformation. Import required packages to convert binary data to string and pass it to hierarchy parser transformation to parse the JSON input to a relational output.

4. Conclusion

The Secure Agent requires a Java Development Kit (JDK) to compile the Java code and generate byte code for the transformation. Azul OpenJDK is installed with the Secure Agent, so you do not need to install a separate JDK. Azul OpenJDK includes the Java Runtime Environment (JRE).

Though AWS S3 v2 connection natively supports reading JSON files, there are limitations in reading complex JSON files. Hence to process complex JSON files, the best way is through Java transformation.

Refer the requirements and limitations in performing the Indirect loading of files from S3 bucket folders from linked article in above section.

Related Article: Indirect File Loading in Informatica Cloud (IICS)

Subscribe to our Newsletter !!

Related Articles:

Learn how to read a JSON file in IICS using Hierarchy Parser transformation. Understand Hierarchical Schema and multiple output groups in JSON

Learn how to create a JSON file in IICS using Hierarchy Builder transformation. Understand how to prepare source data to create JSON file

Learn how to read a Fixed-Width file in Informatica Cloud. Understand how to create and use a Fixed-Width File Format component.